The Trust Gap: Why the Best AI Lesson is Learning to Question It

Last Tuesday, a high school teacher in Chicago spent her entire lunch break running student essays through an AI detection tool. Three came back flagged. She confronted the students. Two cried. One argued. Later that night, she found out the detector had a 26% false positive rate. She had wasted an hour policing, damaged her relationship with three students, and hadn't taught anyone a single thing.

This scene is playing out in classrooms everywhere, and it reveals a problem much larger than cheating. When AI first arrived in schools, the instinct was predictable: we had to stop it. Schools bought detection software, and entire faculty meetings became debates about plagiarism. But in the panic, something got lost. The students using AI weren't learning how to think, and the teachers hunting them down weren't teaching them to, either. Both sides were stuck in a loop—one generating answers, the other policing them—and nobody was doing the actual work of education.

The Problem with Asking the Wrong Question

The real question was never whether a student used AI. It was always whether that student could think for themselves. Consider a college freshman named Daniel. Throughout high school, Daniel used AI to help draft essays, summarize readings, and outline arguments. His grades were excellent and his writing was polished. But then he walked into a philosophy seminar where the professor handed out a passage full of subtle logical flaws and asked what was wrong with the argument.

Daniel froze. He had spent four years producing clean outputs, but he had never once practiced tearing an argument apart. The AI had always given him an answer, but it had never taught him to question whether that answer was actually any good. Daniel's story isn't about a bad kid trying to cheat. It's about a gap that opens when we mistake fluency for understanding. AI can make anyone sound articulate, but sounding articulate and being thoughtful are completely different skills.

Why Educators Are Flipping the Assignment

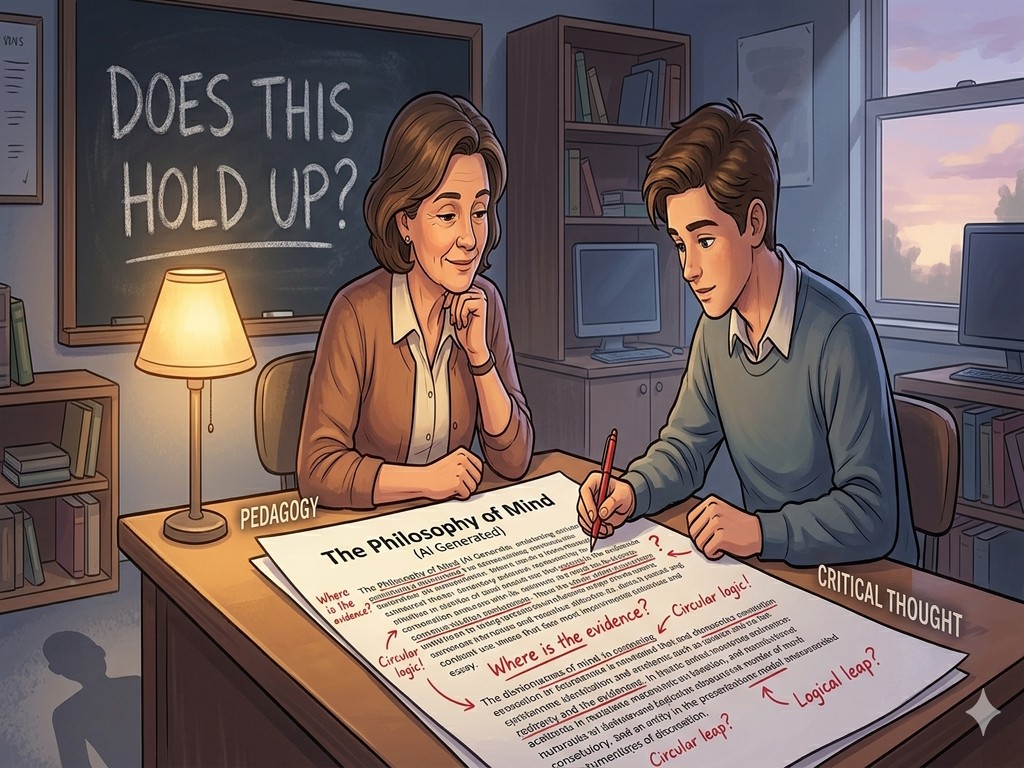

Some educators are figuring this out, and their solution is counterintuitive. Instead of banning AI, they are putting it at the center of the lesson. A teacher named Ms. Rivera in Denver now gives her students an AI-generated essay on climate change and asks them to find three things the machine got wrong. Elsewhere, a professor has students use ChatGPT to build a complex argument, then spends the rest of the week having them dismantle it by identifying weak evidence and missing perspectives.

This shift is subtle but powerful. The AI becomes a sparring partner rather than an answer key. Students stop being passive consumers of an output and start becoming critics of it. That is where the real thinking lives—not in generating the response, but in deciding whether the response holds up under pressure. This is what pedagogy looks like when it stops fighting the algorithm and starts using it as a mirror for thought.

Bridging the Gap for Future Employers

This shift matters far beyond the classroom walls. If you walk into any hiring conversation today, you will hear the same refrain: technical skills can be taught, but we need people who can think. When AI handles the routine tasks—the drafting of emails, the summarizing of reports, the crunching of data—what remains is the hard stuff. Framing the right problem. Spotting what the data doesn't say. Making a judgment call when the evidence is ambiguous.

A student who spent four years interrogating AI-generated arguments enters the workforce knowing how to question assumptions and build an original case. Meanwhile, a student who simply pasted AI answers into their homework walks in knowing only how to prompt a chatbot. The gap between those two people will only widen as the technology improves. Critical thinking is the one skill that doesn't become obsolete when the software updates.

Stepping Out of the Shadow

The algorithm casts a long shadow in education right now. It is easy to get lost in that shadow—chasing detectors, rewriting syllabi, and arguing about what counts as original work. But the teachers who are getting it right have stopped staring at the shadow and turned to face the light. They are asking what kind of thinker they want their students to become, and then they are designing every assignment to build that specific capacity.

The conversation was never really about the algorithm. It was always about the student standing in front of it.