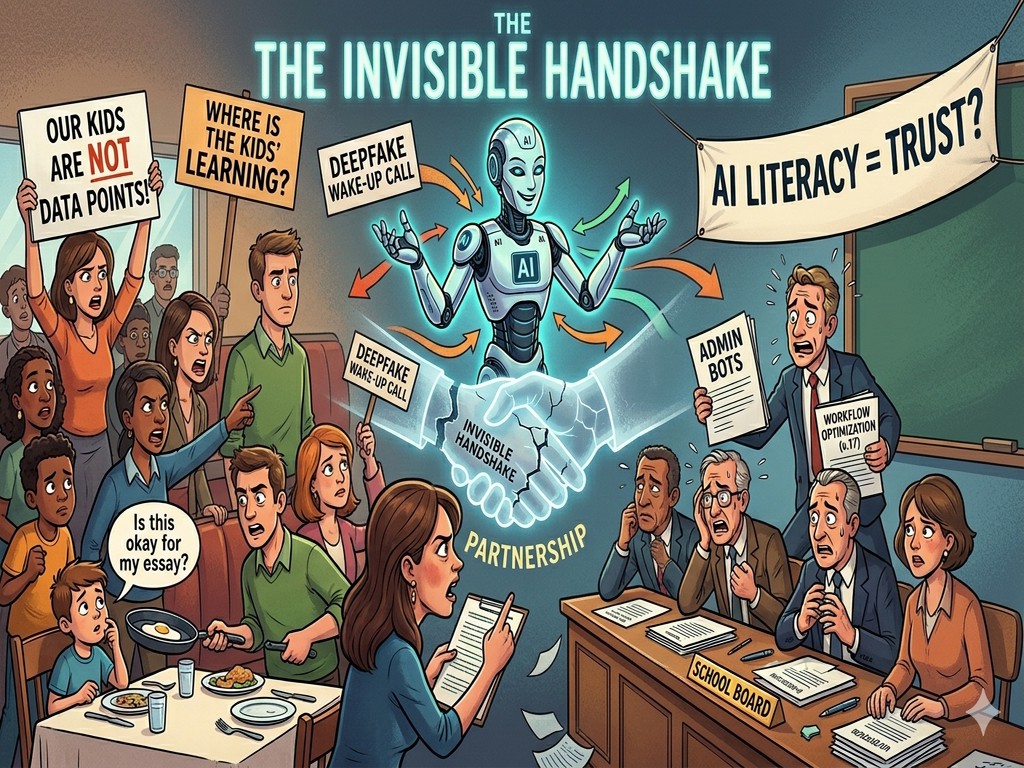

The Invisible Handshake: Why AI in Schools Is Failing the Trust Test

Imagine a handshake. It is the silent, sacred agreement that has governed education for generations. A parent stands at the school gates and hands over their child's future, trusting that the school will provide the tools for success. In return, the school promises transparency, safety, and a seat at the table. For decades, this "Invisible Handshake" was the bedrock of the community.

But lately, there is a new guest at the table, and it didn't come through the front door. It arrived via a late-night software update, a viral TikTok trend, and a series of "Black Box" algorithms. It's called Artificial Intelligence. And for many parents, that firm handshake is starting to feel like a cold, closed fist.

The Midnight Phone Call that Changed Everything

The story of AI in education isn't usually written in a lab; it's written in the living rooms of panicked families. Take the case of Radnor High School in Pennsylvania. It didn't start with a revolutionary new personalized tutor or a breakthrough in grading efficiency. It started with a nightmare.

Parents began receiving frantic calls. Their children's faces—their real, recognizable faces—had been harvested from social media and grafted onto explicit, AI-generated images by classmates. It was a digital violation that felt viscerally real.

The ensuing outrage wasn't directed only at the students who prompted the software. The fury was aimed just as much at the school's silence. When the parents demanded answers, they found a void. There was no policy. There was no "Safety 101" for the generative age. The school was playing catch-up while the families were already in crisis. This is what we call the Radnor Pattern: schools wait for the car to crash before they decide to install seatbelts. When a parent is the last to know that a life-changing technology has entered the classroom, trust doesn't just bend; it shatters.

When "Efficiency" Becomes a Wall

About a hundred miles away in New York City, a different kind of story was unfolding. When the city's Department of Education began rolling out its AI roadmap, it did what most large organizations do: it focused on the "how." It talked about "optimizing teacher workflows," "administrative scalability," and "streamlining data entry."

To a bureaucrat, this sounds like progress. To a parent, it sounds like their child is being turned into a data point.

During public forums, parents pushed back with a simple, stinging question: "Where are the kids in this plan?" They weren't "anti-technology" Luddites wanting to return to the era of the abacus. They were simply asking why the policy read like a corporate merger rather than an educational philosophy.

By focusing on "efficiency," the school system inadvertently signaled that AI was an internal IT tool, like a new printer or a better Wi-Fi router. But AI isn't just a tool; it behaves more like a teammate. It changes how a child learns to write, how they solve problems, and how they perceive truth. When you hire a new "teammate" for a child and don't invite the parents to the interview, you aren't being efficient; you're being exclusionary.

The Literacy Trap: Why Knowing "How" Isn't Enough

In response to this growing anxiety, many districts have pivoted to a new buzzword: AI Literacy. The logic is simple: if we teach students and parents how a large language model works, explaining the math, the tokens, and the neural networks, the fear will evaporate.

But here is the hard truth that many educators miss: You cannot code your way out of a trust deficit.

Imagine a school tells a parent, "Don't worry, we've taught your daughter the ethics of data scraping." That sounds great until that same daughter is wrongly accused of cheating because an "AI detector" (a category of tool that is notoriously unreliable) assigned her essay an 80% "probability" of being AI-generated.

In that moment, knowing how the math works doesn't help. What matters is the human relationship. Does the teacher trust the student's voice? Does the parent trust the school's disciplinary process? Literacy without a foundation of trust is just a manual for a machine that no one actually wants to use. True literacy isn't about shared vocabulary; it's about shared values.

The Schools That Dared to Be Vulnerable

If the story ended here, it would be a tragedy. But across the country, a new narrative is emerging from schools that are getting it right. These aren't necessarily the wealthiest districts or the ones with "AI Labs." They are the ones that had the hardest conversations first.

In some districts, the "Invisible Handshake" is being replaced by a "Visible Partnership." Instead of drafting a policy behind closed doors and "announcing" it via a PDF in an email blast, these schools held "AI Town Halls" before a single piece of software was purchased.

They did something radical: they admitted they didn't have all the answers.

Teachers in these districts began practicing "Visible AI." Instead of banning the tool or using it in secret to grade papers, they brought the AI to the front of the class. They showed the students how it hallucinates. They showed them where it gets facts wrong. They invited parents to sit in on these lessons, effectively saying, "We are exploring this new world together."

When a teacher is vulnerable enough to say, "The AI told me this, but I think it's wrong—what do you think?", they build more credibility than a 50-page handbook ever could. They move from being a "delivery system for information" to a "guide for wisdom."

The New Handshake

The future of AI in education will not be decided by Google, OpenAI, or any Silicon Valley giant. It will be decided in the quiet spaces: in school board meetings, in parent-teacher conferences, and around the dinner table when a child asks, "Is it okay if I use this to help with my essay?"

The AI Bridge Foundation believes that the "Bridge" isn't made of fiber-optic cables or silicon chips. It is made of conversation.

If we want AI to be a tool for equity and empowerment, we have to start by repairing the handshake. Schools must move from transparency after implementation to transparency from the very first idea. Parents must move from a posture of "defense" to one of "design."

Because at the end of the day, the technology might be "Nano" or "Mega," a small model or a massive one, but the stakes are entirely human. It's time to stop treating AI as a secret and start treating it as a shared journey. Only then can we ensure that the hand we are shaking belongs to someone we actually trust.